Agentic AI Needs Verifiable Records to Be Trusted

Agentic AI is moving fast.

Unlike earlier automation, agentic systems do not just respond to prompts or follow static rules. They initiate actions, make decisions, and execute workflows on someone else’s behalf. In real terms, that means AI agents are submitting forms, approving changes, triggering transactions, and interacting with regulated systems.

As that happens, a new question becomes unavoidable: How do you prove what an AI agent did, when it did it, and under whose authority?

Logs and explanations are not enough. If agentic AI is going to operate in real-world workflows, it needs verifiable records.

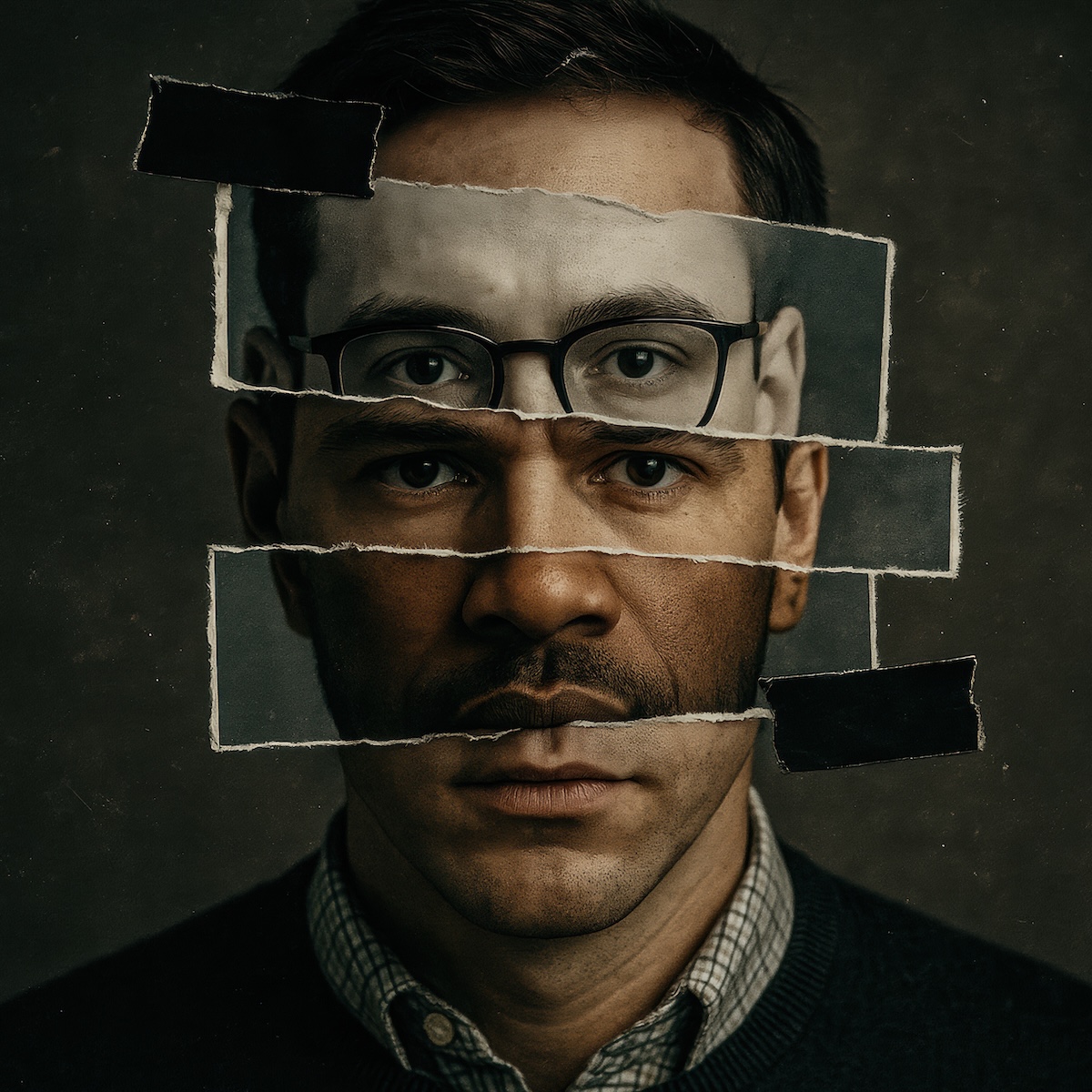

Agentic AI introduces delegated authority, not just automation

Traditional automation executes predefined instructions. Agentic AI exercises judgment within a delegated scope.

That distinction matters because accountability changes the moment a system is allowed to decide, not just act. An AI agent is almost always operating on behalf of a business, an employee, or a customer. That delegation of authority must be provable.

When an AI agent submits a legally binding document, updates account information, or initiates a high-risk transaction, the questions that follow are predictable:

- Who authorized this?

- What exactly happened?

- Can you prove it has not been altered?

Without durable evidence, organizations are left trying to reconstruct intent after the fact. That is a fragile position, especially in regulated or high-trust environments.

The identity layer most protocols ignore

The agentic AI ecosystem is moving fast to build execution infrastructure. OpenAI's Agent Protocol, Google's A2A, Anthropic's MCP, Visa's Trusted Agent spec, Mastercard's Agent Pay: each new release adds another layer of operational structure designed to make agent transactions faster, smoother, and more interoperable.

What they do not add is an answer to the most fundamental question: was there a verified human behind this action?

These protocols assume identity. They pass tokens, scopes, and capabilities, but none of that constitutes proof that a verified human authorized the action. Binding an agent's action to a real, identified human whose specific intent for that transaction has been captured and cryptographically recorded remains an unsolved problem across every major framework.

The industry is solving for execution before it has solved for identity. Without a way to tie an agent's action to a verified human, and to a specific, scoped, revocable authorization for that exact transaction, every layer of execution infrastructure built on top is structurally incomplete. If organizations cannot tell whether an authorization was cryptographically tied to a verified human, or whether it was fabricated, tampered with, or generated by the AI itself, they are processing transactions without a verifiable record of who approved them. That creates a liability gap that often leaves the consumer holding the risk.
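To make "specific, scoped, revocable authorization" concrete, here is a minimal Python sketch of the checks an agent platform might run before acting. The `Authorization` type, its fields, and `is_authorized` are hypothetical illustrations. They are not part of any of the protocols named above or of Proof's platform. The sketch assumes one action type per grant, a spending cap in cents, a timezone-aware expiry, and a set of revoked grant IDs.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Authorization:
    # Hypothetical grant a verified human issues to one agent for one kind of action.
    grant_id: str
    principal: str         # the verified human who said yes
    agent_id: str          # the only agent allowed to use this grant
    action: str            # the single action type in scope
    max_amount_cents: int  # upper bound for the transaction
    expires_at: datetime   # timezone-aware expiry


def is_authorized(grant: Authorization, agent_id: str, action: str,
                  amount_cents: int, now: datetime, revoked_ids: set) -> bool:
    # Every condition must hold; any failure means the agent would act without authority.
    return (
        grant.grant_id not in revoked_ids
        and grant.agent_id == agent_id
        and grant.action == action
        and amount_cents <= grant.max_amount_cents
        and now < grant.expires_at
    )


grant = Authorization(
    grant_id="g-001",
    principal="user:alice",
    agent_id="agent-42",
    action="pay_invoice",
    max_amount_cents=50_000,
    expires_at=datetime(2025, 1, 1, 13, 0, tzinfo=timezone.utc),
)
now = datetime(2025, 1, 1, 12, 30, tzinfo=timezone.utc)

print(is_authorized(grant, "agent-42", "pay_invoice", 42_000, now, set()))      # True
print(is_authorized(grant, "agent-42", "pay_invoice", 90_000, now, set()))      # False: over the cap
print(is_authorized(grant, "agent-42", "pay_invoice", 42_000, now, {"g-001"}))  # False: revoked
```

These checks only enforce scope. They do not prove that a verified human issued the grant. That still requires the grant itself to be bound to a verified identity and cryptographically signed.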

Human identity verification at the moment of authorization is the foundation everything else depends on. Until that layer exists, every agentic transaction lacks a verifiable answer to the question that matters most. That question is not whether agents can act. It is whether anyone can prove a real human said yes.

Why logs and model outputs fail as evidence

Most agentic systems rely on application logs, API traces, or model outputs to explain behavior. These tools are useful for debugging, but they were not designed to function as proof.

Logs are mutable and system controlled. They can be edited, truncated, or regenerated. Model outputs show what was generated, not what was authorized or approved. Neither provides cryptographic assurance that a record is complete or untampered.
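Tamper evidence does not require anything exotic. One common technique is a hash chain: each entry commits to the hash of the entry before it, so editing any earlier entry invalidates every later link. The sketch below is a minimal Python illustration that uses only the standard library. The entry fields (`agent`, `action`, `ts`) and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    # Canonical JSON (sorted keys, no extra whitespace) so the same payload
    # always serializes to the same bytes before hashing.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(chain: list, payload: dict) -> None:
    # Each new entry commits to the hash of the entry before it.
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"payload": payload, "prev": prev, "hash": entry_hash(prev, payload)})


def verify_chain(chain: list) -> bool:
    # Recompute every hash from the start. An edited or reordered entry,
    # or one removed from the middle, breaks the links that follow it.
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


log = []
append(log, {"agent": "agent-42", "action": "submit_form", "ts": "2025-01-01T12:00:00Z"})
append(log, {"agent": "agent-42", "action": "approve_change", "ts": "2025-01-01T12:05:00Z"})
print(verify_chain(log))  # True

log[0]["payload"]["action"] = "view_form"  # a silent after-the-fact edit
print(verify_chain(log))  # False
```

A chain on its own does not catch deletion of the newest entries, because a truncated chain still verifies. That is why the latest hash is usually anchored somewhere outside the system that writes the log.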

That gap becomes obvious under external scrutiny. Auditors, regulators, and courts do not ask whether an AI system behaved reasonably. They ask whether its actions can be independently verified.

What makes a record verifiable

A verifiable record is not just stored data. It is an evidence artifact designed for inspection beyond the system that created it.

At a minimum, a verifiable record captures:

- The action that occurred

- The identity or system authorized to act

- When the action took place

- Cryptographic proof that the record has not been altered
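As a minimal sketch of those four elements, the Python dataclass below captures the action, the authorized party, and the timestamp, and derives a SHA-256 digest over a canonical JSON encoding. The class and field names are hypothetical. The digest supplies the fourth element only once it is signed or stored somewhere the record's creator cannot rewrite. On its own, it just makes any change detectable against a trusted copy of the digest.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class AgentActionRecord:
    # Hypothetical field names covering the minimum elements of a verifiable record.
    action: str          # the action that occurred
    authorized_by: str   # the identity or system authorized to act
    occurred_at: str     # when it happened, as an ISO 8601 UTC timestamp
    details: dict        # action-specific parameters

    def canonical_bytes(self) -> bytes:
        # Sorted keys and fixed separators give exactly one byte encoding per record.
        return json.dumps(asdict(self), sort_keys=True, separators=(",", ":")).encode("utf-8")

    def digest(self) -> str:
        # SHA-256 over the canonical encoding; any field change alters it.
        return hashlib.sha256(self.canonical_bytes()).hexdigest()


record = AgentActionRecord(
    action="update_account_email",
    authorized_by="user:alice@example.com",
    occurred_at="2025-01-01T12:00:00Z",
    details={"account_id": "acct-123", "new_email": "a@example.org"},
)
stored_digest = record.digest()  # in practice, signed or anchored externally

tampered = replace(record, authorized_by="user:mallory@example.com")
print(tampered.digest() == stored_digest)  # False
```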

The critical difference is independence. A third party should be able to validate the record without trusting the internal logs or explanations of the platform that generated it. That independence is what turns an AI action into something accountable.
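Independence usually comes from public-key signatures. The platform signs a record with a private key, and anyone holding the matching public key can check it without access to the platform's internals. The sketch below uses a Lamport one-time signature only because it can be built from Python's standard library (`hashlib`, `secrets`). It illustrates the idea and is not what Proof or any named protocol uses. A Lamport key must sign exactly one message, and real deployments would use a standard scheme such as Ed25519 through a vetted cryptography library.

```python
import hashlib
import secrets


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def keygen():
    # 256 pairs of random secrets; the public key is the hash of each secret.
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(_h(a), _h(b)) for a, b in sk]
    return sk, pk


def _bits(message: bytes) -> list:
    # The 256 bits of the message digest, most significant bit first.
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(sk, message: bytes) -> list:
    # Reveal one secret from each pair, chosen by the digest bits.
    # Signing a second message with the same key leaks secrets, so never reuse it.
    return [sk[i][bit] for i, bit in enumerate(_bits(message))]


def verify(pk, message: bytes, signature: list) -> bool:
    # Needs only the public key: hash each revealed secret and compare.
    if len(signature) != 256:
        return False
    return all(
        _h(revealed) == pk[i][bit]
        for i, (bit, revealed) in enumerate(zip(_bits(message), signature))
    )


sk, pk = keygen()  # the signer keeps sk and publishes pk
record = b'{"action":"submit_form","agent":"agent-42","ts":"2025-01-01T12:00:00Z"}'
sig = sign(sk, record)

# A third party holding only pk, the record, and sig:
print(verify(pk, record, sig))                                # True
print(verify(pk, record.replace(b"submit", b"cancel"), sig))  # False
```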

Where this matters first in real workflows

The need for verifiable records shows up fastest anywhere AI actions carry legal, financial, or compliance consequences.

Common examples include:

- Identity verification and account recovery

- Contract execution and notarized documents

- Financial services workflows

- Government and regulated transactions

- High-risk account or permission changes

In these environments, the burden of proof matters. Organizations are expected to show what happened, not just explain it. Agentic AI without verifiable records forces teams to defend decisions using tools that were never meant to serve as evidence.

Verifiable records make AI actions inspectable

The most important shift verifiable records introduce is inspectability.

When an AI agent produces a cryptographically verifiable record, downstream parties can independently confirm that an action occurred, that it reflects a specific moment in time, and that it has not been modified.

This is especially important when AI actions intersect with human authority. A verifiable record can link an agent’s action to the approval or authorization that enabled it. That linkage is essential for accountability, not just transparency.
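One simple way to express that linkage is to have the action record carry a digest of the approval it relies on, so a verifier can recompute the digest and confirm the two belong together. This Python sketch assumes both records are plain dictionaries; the function names and fields are hypothetical. In practice the action record would itself be signed, otherwise an attacker could rewrite the embedded digest along with the approval.

```python
import hashlib
import json


def digest(obj: dict) -> str:
    # SHA-256 over canonical JSON, so equal content yields an equal digest.
    return hashlib.sha256(
        json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    ).hexdigest()


def link_action(action: dict, approval: dict) -> dict:
    # Return a new action record that embeds the digest of the approval it relies on.
    return {**action, "approval_digest": digest(approval)}


def action_matches_approval(action_record: dict, approval: dict) -> bool:
    # Recompute the approval digest; an approval that was swapped, edited,
    # or never linked will not match.
    return action_record.get("approval_digest") == digest(approval)


approval = {"approver": "user:alice", "scope": "sign_contract:c-789", "ts": "2025-01-01T11:58:00Z"}
action = {"agent": "agent-42", "action": "sign_contract", "contract": "c-789", "ts": "2025-01-01T12:00:00Z"}

record = link_action(action, approval)
print(action_matches_approval(record, approval))  # True

forged = {**approval, "approver": "user:mallory"}
print(action_matches_approval(record, forged))    # False
```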

Trust in AI comes from evidence, not explanations

Explainability helps people understand AI behavior. Evidence proves it.

As AI systems take on more responsibility, trust will not come from better narratives about why a decision was made. It will come from records that hold up outside the system that generated them.

Verifiable records turn agentic AI from a black box into an accountable participant in real-world workflows.

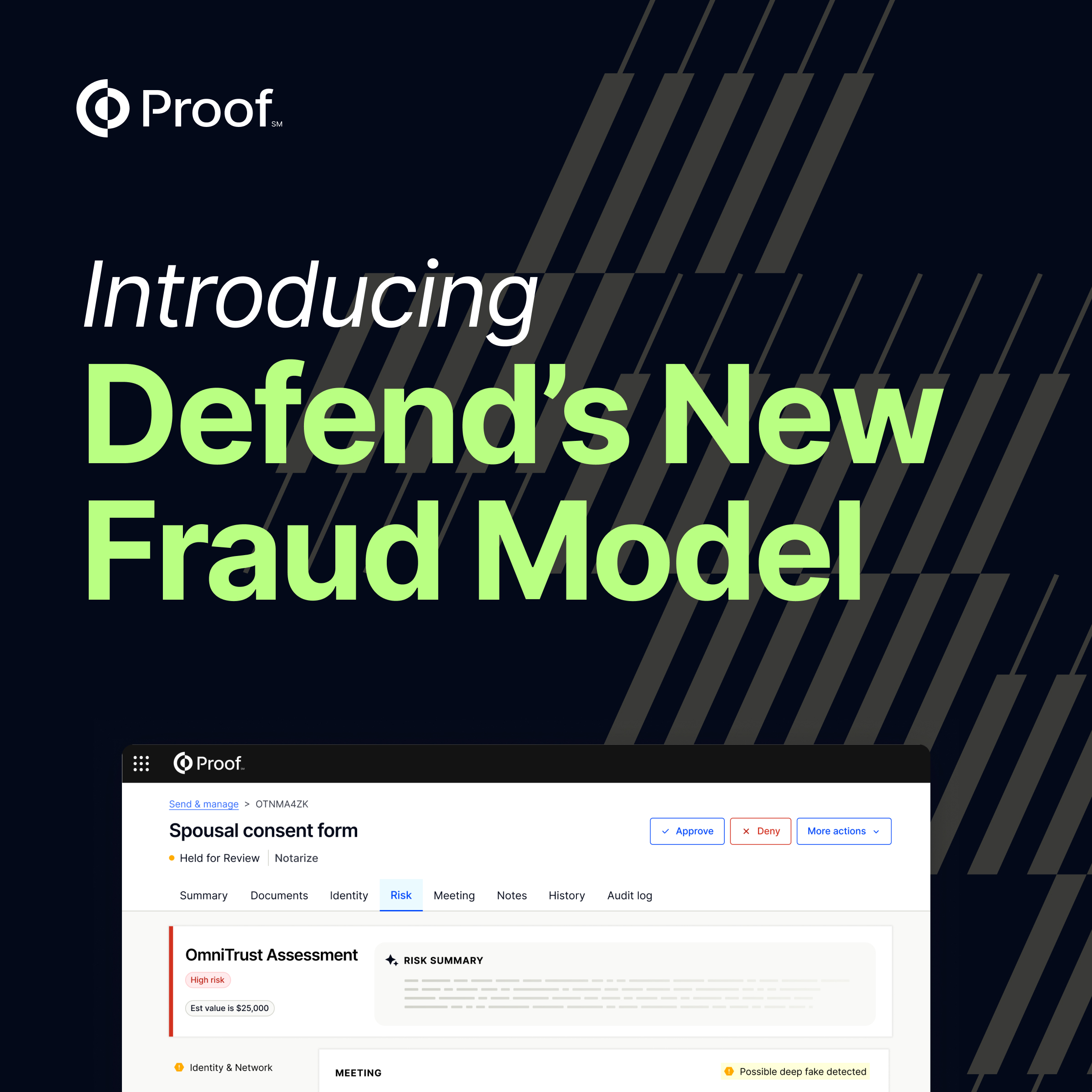

How Proof supports verifiable records for AI-driven workflows

Proof helps organizations create tamper-evident, cryptographically verifiable records for high-trust moments. The Proof platform is designed for workflows where identity, intent, and evidence matter. That foundation makes it well suited for AI-assisted and agentic systems that need to stand up to external scrutiny.

With Proof, organizations can produce durable records tied to real identity and authorization, reducing reliance on internal logs as the sole source of truth.

As AI agents take on more responsibility, verifiable records are no longer optional. They are infrastructure.

The future of agentic AI

Agentic AI will continue to evolve. Autonomy will increase, and decisions will carry more weight.

The systems that succeed will be the ones that pair intelligence with accountability. Verifiable records make that possible by turning AI actions into inspectable, defensible events.

If agentic AI is going to operate in the real world, it needs Proof.

Ready to make AI-driven actions verifiable? Learn how Proof helps organizations create tamper-evident, cryptographically verifiable records for high-trust workflows.
